Google Search Console Not Indexing Pages? Here’s What to Fix First

You hit "publish" on a high-quality piece of content, submit your sitemap, and even manually request indexing. Yet, weeks later, you check Google Search Console (GSC) only to see that dreaded gray status: Not Indexed.

If you are wondering why Google is not indexing your pages, you are not alone. Many website owners assume this is a technical glitch or a bug. However, in modern SEO, indexing is rarely a submission problem. It is usually a quality and prioritization problem.

Before you blindly click "Request Indexing" again, you need to understand the underlying reasons why Google ignores certain URLs and how to strategically fix them.

Google doesn't index everything it finds. To make it into the search results, a page must pass a four-step evaluation process:

Before Google can index a page, it has to find it. Can Googlebot easily discover your URL through strong internal links, a clean sitemap, or external backlinks? If your page is an "orphan" (meaning no other pages link to it), discovery becomes much harder.
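If you already have a crawl export of your internal links, spotting orphans is a simple set comparison. Here is a minimal sketch in Python. The URL list and link map are made-up sample data, and `find_orphans` is a hypothetical helper, not a feature of any SEO tool.

```python
def find_orphans(all_urls, links):
    """Return URLs in all_urls that no other page links to.

    all_urls: URLs you expect to be indexed (for example, from your sitemap).
    links: dict mapping a source URL to the list of URLs it links to.
    """
    linked = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not help discovery
                linked.add(target)
    return sorted(url for url in set(all_urls) if url not in linked)


# Hypothetical site data for illustration only.
sitemap_urls = ["/", "/blog/", "/blog/post-a", "/blog/post-b"]
internal_links = {
    "/": ["/blog/"],
    "/blog/": ["/", "/blog/post-a"],
}

orphans = find_orphans(sitemap_urls, internal_links)
print(orphans)  # ['/blog/post-b']
```

Any URL this returns is only reachable through the sitemap, so adding at least one contextual internal link to it is a sensible first step.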

Once discovered, Google must be able to crawl the page. Is the page technically accessible? Is it free from server errors, and is it allowed to be crawled according to your robots.txt file?
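You can sanity-check a URL against your robots.txt rules before waiting on Google. The sketch below uses Python's standard `urllib.robotparser`. The rules and URLs are sample values, and Python's parser does not reproduce every detail of how Googlebot resolves conflicting Allow and Disallow rules, so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (an assumption for this example).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_crawlable(ROBOTS_TXT, "https://example.com/blog/post-a"))     # True
print(is_crawlable(ROBOTS_TXT, "https://example.com/admin/settings"))  # False
```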

This is where most pages fail. Google asks: Does this page offer unique, useful, and non-duplicative information? If your page is thin, overly similar to other pages on your site, or lacks real value, Google will crawl it but refuse to index it.

Does Google view your overall domain as reliable enough to spend its limited "crawl budget" and indexing resources on? Newer sites or sites with a history of spammy content often struggle to earn this initial trust.

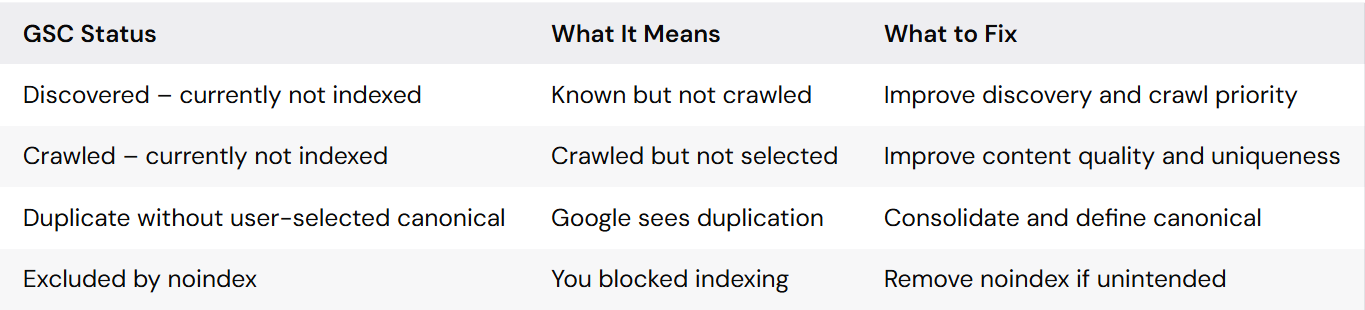

When you look at the Pages report in Google Search Console, you'll see specific reasons why pages aren't indexed. Here is a breakdown of the most common statuses and what you need to do.

This status means Google knows your URL exists (usually via a sitemap or a link), but Googlebot hasn't crawled it yet. Usually, this happens because Google's crawl budget for your site was used up, or the page wasn't deemed important enough to crawl immediately.

How to fix it:

If you see this, Google did visit your page, read the content, and actively decided it wasn't worth putting in the search results. This is almost always a content quality issue, not a technical one.

How to fix it:

Google has found multiple URLs with the exact same (or highly similar) content and doesn't know which one to rank. This often happens with e-commerce product variants or URL parameters (e.g., ?sort=price).
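To see how parameter variants collapse into one primary URL, here is a rough Python sketch. The set of parameters treated as "non-content" is an assumption for this example and will differ on your site, and `primary_url` is a hypothetical helper name.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters change presentation or tracking, not content.
NON_CONTENT_PARAMS = {"sort", "utm_source", "utm_medium", "utm_campaign"}


def primary_url(url):
    """Strip non-content query parameters and any fragment from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in NON_CONTENT_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


variants = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?utm_source=newsletter&sort=name",
    "https://example.com/shoes",
]
print({primary_url(u) for u in variants})  # {'https://example.com/shoes'}
```

All three variants resolve to the same address, which is the URL you would want each of them to declare as canonical.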

How to fix it:

Use <link rel="canonical" href="..."/> tags to point Google to the primary version of the page.

These are direct technical blocks. If a page is blocked by robots.txt, Googlebot cannot even crawl it. If it has a noindex tag, Google can crawl it but is forbidden from showing it in search results.

How to fix it:

Check your robots.txt file to ensure you aren't disallowing important directories.

When dealing with thousands of unindexed pages, do not panic and do not try to fix everything at once. Follow this prioritization hierarchy:

Before you publish your next article, run it through this quick checklist to ensure maximum indexability:

Is the page free of noindex tags?

If these problems affect important templates or large sections of your site, a technical SEO audit can help uncover crawl waste, duplication, and indexing blockers at scale. For implementation-heavy fixes, explore our technical SEO service.

To fix a "noindex" issue, remove the <meta name="robots" content="noindex"> tag from the HTML <head> of your page, or remove the noindex value from the X-Robots-Tag HTTP response header if your server sends one. If you are using WordPress, check the settings in your SEO plugin to ensure the page is set to "Index".
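If you want to confirm where a noindex is coming from, you can check both places in one pass. The following is a simplified Python sketch using only the standard library. `has_noindex` is a hypothetical helper, the HTML and headers are sample inputs, and it ignores user-agent-prefixed header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser

# "none" is shorthand for "noindex, nofollow", so it also blocks indexing.
BLOCKING = {"noindex", "none"}


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key.lower(): (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html, headers=None):
    """Return True if the HTML or the X-Robots-Tag header blocks indexing."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = [d.strip().lower() for d in header_value.split(",")]
    return any(d in BLOCKING for d in parser.directives + header_directives)


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))                                        # True
print(has_noindex("<html></html>", {"X-Robots-Tag": "noindex"}))  # True
print(has_noindex("<html><head><title>Post</title></head></html>"))  # False
```

Checking the header as well matters because a page can look clean in its HTML source and still be excluded by a server-level rule.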

Google may not index a page because it hasn't discovered it yet, because the page is blocked by technical directives (like robots.txt or a noindex tag), because it is a duplicate of another page, or, most commonly, because the content is considered too thin or low-quality to provide value to searchers.

Start by identifying the exact error category in the "Pages" report. Technical errors (like 404s or server issues) require developer fixes or redirects. The "Crawled - currently not indexed" and "Discovered - currently not indexed" statuses require SEO fixes, specifically improving internal linking and content quality. Always click "Validate Fix" in GSC once you've made your changes.

This status means Google successfully found and accessed your page, but it detected a "noindex" directive. Because of this tag, Google respects your command and intentionally excludes the page from search results. This is normal for admin pages or thank-you pages, but an error if it happens on your core blog posts.

Getting indexed is the foundational step of SEO. Focus on building an easily navigable site architecture and publishing genuinely helpful content, and indexing issues will largely resolve themselves.

Handpicked insights from the ProsearchLab editorial team