From Invisible to Indexed—Unblocking a B2B Robotics Brand

How fixing JavaScript rendering issues turned a "ghost site" into a lead generation engine for high-ticket industrial automation.

Our client, a manufacturer of autonomous mobile robots (AMRs) for warehouse logistics, launched a visually stunning website built on a modern JavaScript framework (React). It was fast, sleek, and offered a great user experience.

There was just one problem: To Google, the website didn't exist.

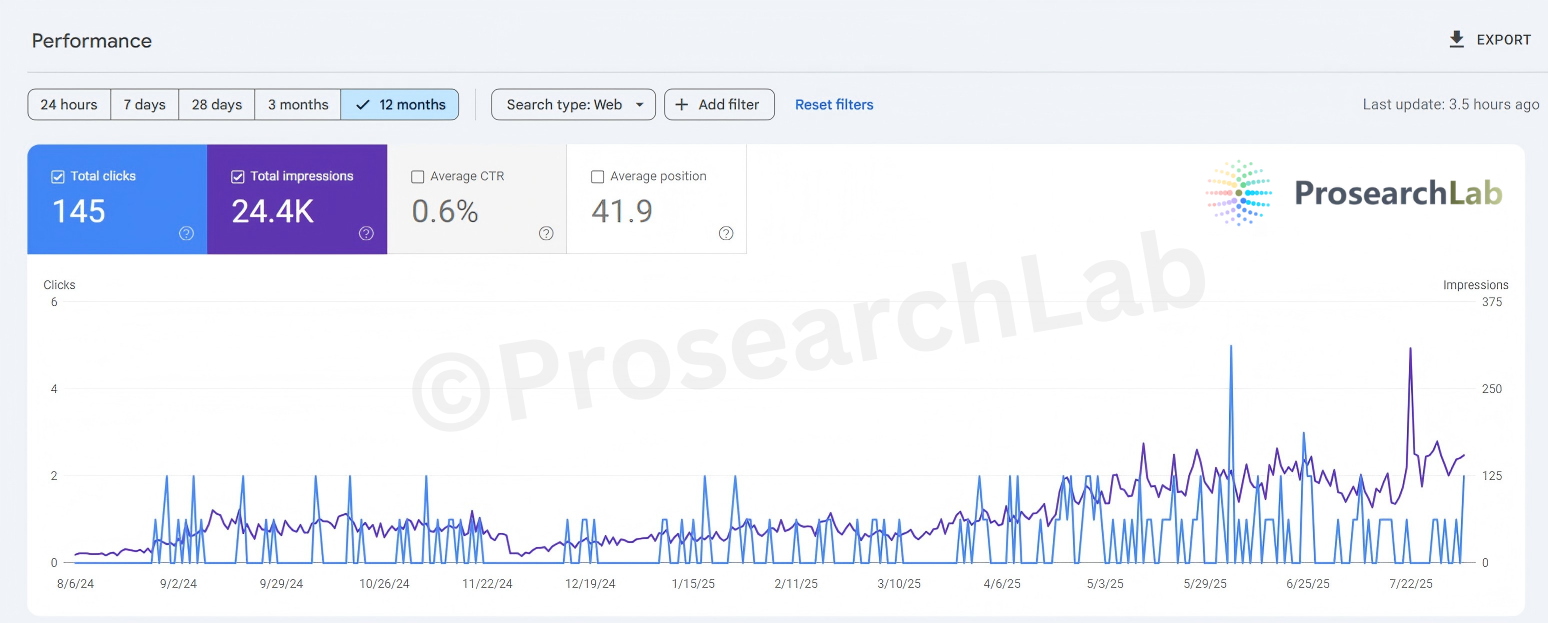

For the first six months (August to February), their Google Search Console report was a flatline. The marketing team assumed they were simply stuck in the "Google Sandbox" because it was a new domain. They kept publishing blog posts, but nothing ranked. Not even for their own brand name.

They came to ProsearchLab asking for backlinks. We told them to hold their budget—we needed to look under the hood first.

We ran a technical audit and immediately identified the culprit: Client-Side Rendering (CSR).

Because the site relied entirely on JavaScript to load content in the browser, Google's crawlers were fetching each page and seeing an empty HTML container before the content could render. In practice, the crawler wasn't "waiting" for the JavaScript to execute.

Effectively, the client was handing Google a book with blank pages and hoping the crawler would figure out how to use invisible ink.
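To make the "blank pages" problem concrete, here is a minimal sketch of how you might compare what a non-rendering crawler can read in two versions of the same page. Everything in it is illustrative: the sample markup, the `visible_text` helper, and the list of skipped tags are our assumptions for this example, not the client's code or a description of how Googlebot works internally.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects body text that is readable without executing JavaScript."""

    # Assumption: text inside these tags is not counted as page content.
    SKIPPED_TAGS = {"head", "script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside the skipped tags.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Return the readable body text contained in the raw HTML response."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical client-side-rendered shell: the body is an empty container.
CSR_SHELL = (
    '<html><head><title>AMR</title></head><body>'
    '<div id="root"></div><script src="/static/js/main.js"></script>'
    '</body></html>'
)

# Hypothetical pre-rendered version of the same page.
PRERENDERED = (
    '<html><head><title>AMR</title></head><body><div id="root">'
    '<h1>Autonomous mobile robots</h1><p>Built for warehouse logistics.</p>'
    '</div></body></html>'
)

if __name__ == "__main__":
    print(repr(visible_text(CSR_SHELL)))    # ''
    print(repr(visible_text(PRERENDERED)))  # 'Autonomous mobile robots Built for warehouse logistics.'
```

If the first call comes back empty for your own pages, the raw HTML response carries no content, and that is the symptom described above.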

Figure 1: The flatline (Aug–Feb) shows the site was invisible to Google because of JavaScript rendering issues. The traffic spike begins immediately after our technical fix.

The sudden activity in March correlates with our technical intervention.

We didn't write a single new blog post initially. Our priority was purely technical infrastructure.

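We couldn't rewrite the entire site codebase overnight. Instead, we implemented a dynamic rendering solution: search engine crawlers receive pre-rendered, static HTML, while human visitors continue to get the client-side React app.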
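The core of dynamic rendering is a routing decision based on who is asking for the page. The sketch below shows that decision in its simplest form. It is not the client's production setup: the `CRAWLER_TOKENS` list, the function names, and the variant labels are assumptions chosen for illustration, and a real deployment would also need a pre-rendering service and caching behind it.

```python
# Assumption: a short, non-exhaustive list of substrings found in the
# User-Agent headers of common search engine crawlers.
CRAWLER_TOKENS = ("googlebot", "bingbot", "duckduckbot", "yandex", "baiduspider")


def is_crawler(user_agent: str) -> bool:
    """Return True if the User-Agent header looks like a known crawler."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Decide which version of a page to serve for this request.

    "prerendered" -> static HTML snapshot with the content already in it
    "client_side" -> the normal JavaScript app shell
    """
    return "prerendered" if is_crawler(user_agent) else "client_side"


if __name__ == "__main__":
    googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    chrome = (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
    )
    print(choose_variant(googlebot))  # prerendered
    print(choose_variant(chrome))     # client_side
```

The important design constraint is that both variants carry the same content. The pre-rendered snapshot is only a different delivery format for crawlers, not a different page.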

Since the internal linking structure was also JS-dependent, crawlers couldn't navigate from the homepage to product pages. We manually built a comprehensive XML sitemap and submitted it directly in Google Search Console (GSC) to force-feed the site structure to the index.
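For readers who have not built one by hand, a sitemap is just an XML list of URLs in the sitemaps.org format. Here is a minimal sketch of generating one with Python's standard library. The `build_sitemap` helper and the example.com URLs are hypothetical placeholders, not the client's actual pages.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML document (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes special characters such as "&" in the URL.
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical URLs for illustration only.
    pages = [
        "https://www.example.com/",
        "https://www.example.com/products/",
        "https://www.example.com/products/example-amr/",
    ]
    print(build_sitemap(pages))
```

The resulting file is typically saved as `sitemap.xml` at the site root, and its URL is then submitted in the Sitemaps report in Google Search Console.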

Once the technical pipes were fixed, we pivoted the keyword strategy. We ignored high-volume terms like "Warehouse Robot" (too broad) and optimized product pages for hyper-specific engineering queries, such as:

The impact was immediate. As seen in the performance graph, indexation spiked in March, just weeks after the deployment.

While 145 total clicks might look like a rounding error to a B2C e-commerce site, in the world of industrial automation, this is a massive win.

Technical SEO is the foundation. You can have the best content in your industry, but if your rendering strategy blocks the crawler, you are speaking to an empty room.

At ProsearchLab, we don't just chase traffic graphs; we fix the technical bottlenecks that prevent business growth.
